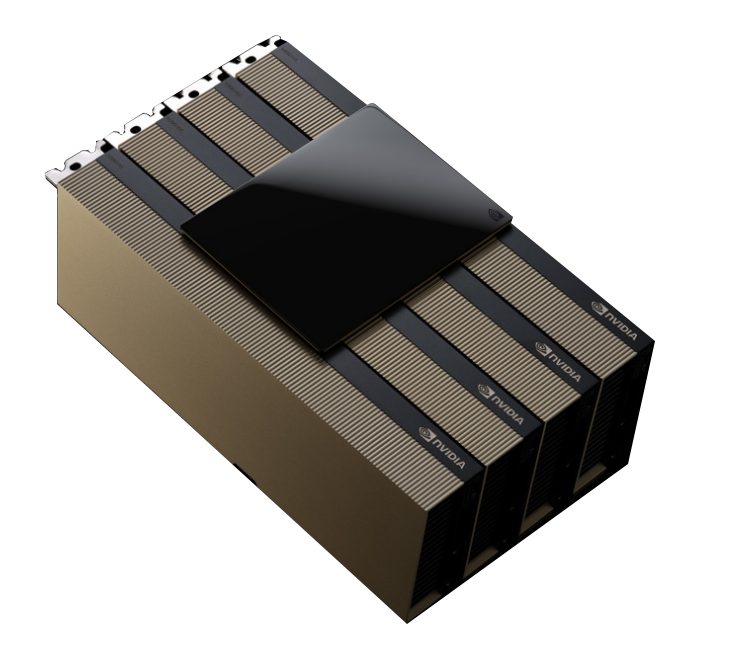

NVIDIA H200 Tensor Core GPU

The NVIDIA H200 Tensor Core GPU supercharges generative AI and high-performance computing (HPC) workloads with game-changing performance and memory capabilities.

As the first GPU with HBM3e, the H200’s larger and faster memory fuels the acceleration of generative AI and large language models (LLMs) while advancing scientific computing for HPC workloads.

In the ever-evolving landscape of AI, businesses rely on LLMs to address a diverse range of inference needs. An AI inference accelerator must deliver the highest throughput at the lowest TCO when deployed at scale for a massive user base.

The H200 boosts inference speed by up to 2X compared to H100 GPUs when handling LLMs like Llama2.

Memory bandwidth is crucial for HPC applications because it enables faster data transfer and reduces complex processing bottlenecks. For memory-intensive HPC applications like simulations, scientific research, and artificial intelligence, the H200’s higher memory bandwidth ensures that data can be accessed and manipulated efficiently, leading to up to 110X faster time to results compared to CPUs.
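To make the bandwidth argument concrete, the sketch below estimates a floor on per-token latency for a memory-bound LLM decode step, where every weight must be streamed from GPU memory once per generated token. The 4.8TB/s and 141GB figures come from the specification table; the 70-billion-parameter model, the 1-byte-per-weight storage, and the function name `min_decode_latency_s` are illustrative assumptions, and the estimate ignores KV-cache traffic, compute time, and batching.

```python
# Figures taken from the H200 specification table.
H200_BANDWIDTH_BYTES_PER_S = 4.8e12   # 4.8 TB/s
H200_MEMORY_BYTES = 141e9             # 141 GB


def min_decode_latency_s(num_params: float,
                         bytes_per_param: float,
                         bandwidth: float = H200_BANDWIDTH_BYTES_PER_S) -> float:
    """Idealized lower bound: time to read every weight from memory once."""
    weight_bytes = num_params * bytes_per_param
    if weight_bytes > H200_MEMORY_BYTES:
        raise ValueError("weights do not fit in a single GPU's memory")
    return weight_bytes / bandwidth


if __name__ == "__main__":
    # Hypothetical 70B-parameter model stored at 1 byte per weight (e.g. FP8).
    latency = min_decode_latency_s(70e9, 1.0)
    print(f"bandwidth-bound floor: {latency * 1e3:.2f} ms per token")
```

Under these assumptions the floor scales inversely with memory bandwidth, which is why a bandwidth increase translates fairly directly into faster single-stream decoding.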

With the introduction of the H200, energy efficiency and TCO reach new levels. This cutting-edge technology offers unparalleled performance, all within the same power profile as the H100. AI factories and supercomputing systems that are not only faster but also more eco-friendly deliver an economic edge that propels the AI and scientific community forward.

| Specification | H200 SXM¹ | H200 NVL¹ |
|---|---|---|
| FP64 | 34 TFLOPS | 30 TFLOPS |
| FP64 Tensor Core | 67 TFLOPS | 60 TFLOPS |
| FP32 | 67 TFLOPS | 60 TFLOPS |
| TF32 Tensor Core² | 989 TFLOPS | 835 TFLOPS |
| BFLOAT16 Tensor Core² | 1,979 TFLOPS | 1,671 TFLOPS |
| FP16 Tensor Core² | 1,979 TFLOPS | 1,671 TFLOPS |
| FP8 Tensor Core² | 3,958 TFLOPS | 3,341 TFLOPS |
| INT8 Tensor Core² | 3,958 TFLOPS | 3,341 TFLOPS |
| GPU Memory | 141GB | 141GB |
| GPU Memory Bandwidth | 4.8TB/s | 4.8TB/s |
| Decoders | 7 NVDEC, 7 JPEG | 7 NVDEC, 7 JPEG |
| Confidential Computing | Supported | Supported |
| Max Thermal Design Power (TDP) | Up to 700W (configurable) | Up to 600W (configurable) |
| Multi-Instance GPUs | Up to 7 MIGs @18GB each | Up to 7 MIGs @16.5GB each |
| Form Factor | SXM | PCIe, dual-slot, air-cooled |
| Interconnect | NVIDIA NVLink™: 900GB/s; PCIe Gen5: 128GB/s | 2- or 4-way NVIDIA NVLink bridge: 900GB/s per GPU; PCIe Gen5: 128GB/s |
| Server Options | NVIDIA HGX™ H200 partner and NVIDIA-Certified Systems™ with 4 or 8 GPUs | NVIDIA MGX™ H200 NVL partner and NVIDIA-Certified Systems with up to 8 GPUs |
| NVIDIA AI Enterprise | Add-on | Included |

¹ Preliminary specifications. May be subject to change.

² With sparsity.
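The Tensor Core figures and the memory bandwidth above can be combined into a rough roofline-style estimate. The sketch below is illustrative only: it assumes the "with sparsity" figures are twice the dense rate (the usual convention for 2:4 structured sparsity, not stated in this datasheet), and the names `dense_tflops` and `ridge_point_flop_per_byte` are hypothetical helpers. The ridge point is the arithmetic intensity, in FLOP per byte moved, above which a kernel stops being limited by memory bandwidth.

```python
# Peak Tensor Core throughput with sparsity, in TFLOPS, from the table above.
SPARSE_TFLOPS = {
    "H200 SXM": {"TF32": 989, "FP16": 1979, "FP8": 3958},
    "H200 NVL": {"TF32": 835, "FP16": 1671, "FP8": 3341},
}
MEMORY_BANDWIDTH_TB_PER_S = 4.8  # same for both variants


def dense_tflops(sparse_tflops: float) -> float:
    """Assumption: the sparse figure is 2x the dense figure."""
    return sparse_tflops / 2.0


def ridge_point_flop_per_byte(peak_tflops: float,
                              bandwidth_tb_per_s: float = MEMORY_BANDWIDTH_TB_PER_S) -> float:
    """TFLOPS divided by TB/s gives FLOP per byte."""
    return peak_tflops / bandwidth_tb_per_s


if __name__ == "__main__":
    for variant, rates in SPARSE_TFLOPS.items():
        for precision, sparse in rates.items():
            dense = dense_tflops(sparse)
            ridge = ridge_point_flop_per_byte(dense)
            print(f"{variant} {precision}: {dense:.1f} dense TFLOPS, "
                  f"ridge at about {ridge:.0f} FLOP/byte")
```

Kernels whose arithmetic intensity falls below the ridge point, such as small-batch LLM decoding, are the ones that benefit most from higher memory bandwidth rather than higher peak TFLOPS.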